Introduction

In the youthful field of usability, we may be surprised to hear about "venerable" measures of usability. However, that's just what we have with the DEC "System Usability Scale" (SUS) copyrighted in 1986 and publicly discussed in 1996 by John Brooke.

Say you are tackling a vexing task for redesign. Would you like to get a baseline questionnaire measure of subjective "ease of use" on the original design? And then would you like to compare your redesign with a follow-up measure (to see if the design works better)?

Do you want a questionnaire to be fast, fast, and fast (otherwise known as "Quick and Dirty")?

Of course.

Time is money

And that's what motivated John Brooke and colleagues to invent a ten-question assessment that takes about 90 seconds to fill out. In fact, it's so easy your test participants could fill it out several times during a longer usability test session.

"Quick and (not so) dirty" means you can get data that measures user-friendliness by task! Plus you can compare your design progress over time!

But wait, how do we compare our design with the larger "community" of designs out there? We know that our end-users interact with many user interface designs. How does ours compare?

Wouldn't it be nice to see whether our design is just "OK" versus "good," "excellent," or "best imaginable"?

Put another way, wouldn't it be nice to know...

- Whether our user groups give our design a "C", "B," or an "A"? Get a grade!

- How well our web design encourages visitors to come back to our site? That is, how well does the design support a web site "loyalty program"? Get repeat visitors!

Let's cover two studies that answer both of these hard and practical questions.

Making the grade with your SUS metric

I've read a lot of research. Because of their visionary efforts across ten years, my hat goes off to the team of three researchers who systematically collected almost 3,500 results of SUS surveys over those ten years. The team consists of two gentlemen from AT&T Labs, Aaron Bangor and James Miller, and a professor at Rice University, Philip Kortum.

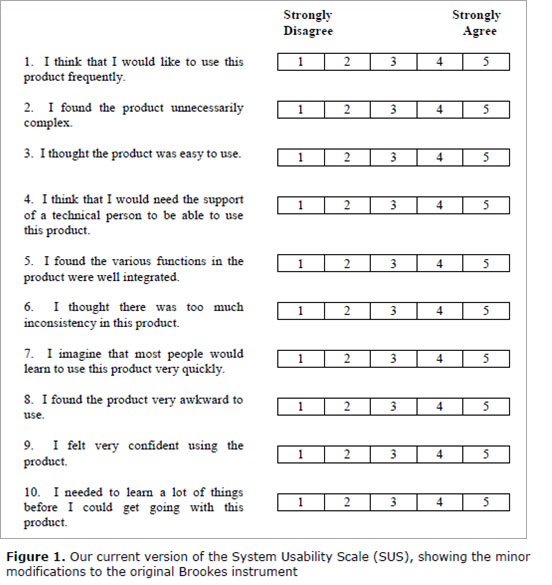

They used the SUS survey shown here across six different interface design contexts: Web (41% of the 3,500 responses), Cell phone (17%), IVR (Interactive Voice Response) (17%), GUI (Graphical User Interface) (7%), Hardware (7%), and Television (5%) interface products. (This graphic comes from their published study.)

See Brooke, J. (1996) for scoring methodology and the original text. (Our Bangor et al researchers found that the word "awkward" in statement 8 worked better than the original word "cumbersome". They also use the word "product" instead of "system".)
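
For readers who want to see the arithmetic, here is a minimal Python sketch of the scoring as it is commonly described: odd-numbered (positively worded) statements contribute the response minus 1, even-numbered (negatively worded) statements contribute 5 minus the response, and the 0 to 40 total is multiplied by 2.5. It assumes responses are coded 1 (strongly disagree) to 5 (strongly agree); the function name `sus_score` is ours, not Brooke's.

```python
def sus_score(responses):
    """Return the 0-100 SUS score for one participant's ten answers (each 1-5)."""
    if len(responses) != 10:
        raise ValueError("SUS needs exactly ten responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += response - 1   # positively worded item: 1..5 becomes 0..4
        else:
            total += 5 - response   # negatively worded item: reversed to 0..4
    return total * 2.5              # scale the 0..40 total to 0..100


# A participant who loves the product: agrees with every positive statement
# and disagrees with every negative one.
print(sus_score([5, 1, 5, 1, 5, 1, 5, 1, 5, 1]))  # 100.0

# A participant who answers "neutral" throughout.
print(sus_score([3] * 10))  # 50.0
```

Because the negative statements are reversed before summing, loving the product and hating it do not produce the same score.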

Mirror, mirror on the wall, who's the fairest of us all?

Our authors asked an important question, which affects us all.

What does a specific SUS score mean in describing the usability of a product?

Do you need a score of 50 (out of 100) to say that a product is usable, or do you need a score of 75 or 100?

The first part of their answer was to look at the distribution of scores across the 3,463 questionnaire results contained within their 273 studies.

- Half the 3,463 scores were above 70 and half were below. That is, the median was a score of 70.

- When the scores were averaged for each study, the top 25% of studies had a mean score of 77.8 or above.

So now we have a sense of the middle score (70) and the average score for the top 25% of studies (scores ~78 and above).
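
If you keep your own collection of SUS results, you can find the same two landmarks yourself. The sketch below uses Python's standard `statistics` module; the list `study_means` is made-up data for illustration only, not figures from Bangor et al.

```python
import statistics

# Hypothetical mean SUS scores from eight of your own studies.
study_means = [52.0, 61.5, 66.0, 70.0, 72.5, 78.0, 81.0, 85.5]

# The middle of the distribution: half the studies fall above, half below.
median_score = statistics.median(study_means)

# quantiles(..., n=4) returns the three quartile cut points;
# the last one is the threshold for the top 25% of studies.
first_q, second_q, third_q = statistics.quantiles(study_means, n=4)

print("median:", median_score)
print("top-quartile cutoff:", third_q)
```

The exact quartile cutoff depends on the interpolation method, so treat it as approximate when the sample is small.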

Does the idea of your design falling in the top 25% of studies give you a feeling for "good"?

Well, we hope that the top 25% must have some value. But we need more evidence.

We could look at other studies. For example, Tullis and Albert (2008) show that an SUS score of 81.2 puts you in the top 10% of their particular sample of 129 studies. So, we have a second snapshot of quality – the top 10% of another group of SUS surveys.

Pop the question to seal the deal

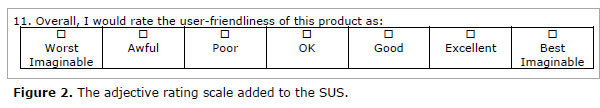

But even better, our three authors analyzed a final, single question added to 959 of their recent SUS questionnaires. Participants picked one of these adjectives after answering the SUS questions.

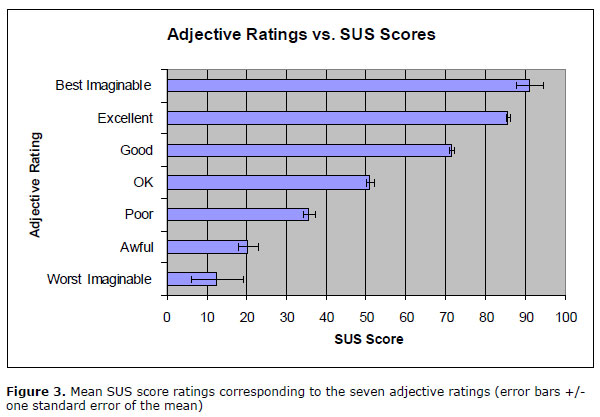

Here's how the SUS scores matched the adjectives (all graphics come from the study publication):

So now you have some adjectives to include in your report. You can give the score, and this chart shows you which adjective matches the score.

Do it yourself, too; and make the grade

Better yet, include the adjectives with your own SUS questionnaire. Let your participants give you an overall evaluation directly. See how closely your average SUS results match the chart given above.

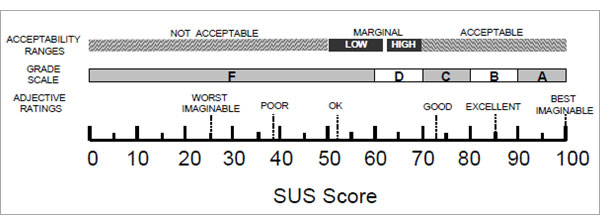

Which leads us to how you can give a "grade" to your design.

Our three authors make a speculation. It goes like this.

The traditional school grading scale uses numeric scores that represent the percentage a student has earned out of the total possible score. Remember?

A score of 70% to 79% on a test got you a "C". A score of 80% to 89% got you a "B" and a score of 90% or more got you an "A". (You never got a "D" or an "F", right?)

See what our authors propose in the context of matching their adjective results with a proposed "grading vocabulary". Does it make sense to you? It sure makes sense to me.
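
As a rough sketch, the school-grade analogy can be written as a small lookup. The cutoffs for "A", "B", and "C" follow the scale described above; the "D" (60 to 69) and "F" (below 60) cutoffs are our assumption based on the conventional school scale, and the function name `sus_grade` is hypothetical.

```python
def sus_grade(score):
    """Map a 0-100 SUS score to a school-style letter grade."""
    if not 0 <= score <= 100:
        raise ValueError("SUS scores range from 0 to 100")
    if score >= 90:
        return "A"
    if score >= 80:
        return "B"
    if score >= 70:
        return "C"
    if score >= 60:
        return "D"   # assumed cutoff, conventional school scale
    return "F"       # assumed cutoff, conventional school scale


print(sus_grade(82))  # B
print(sus_grade(70))  # C
```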

Extending your SUS scores to "loyalty"

Recall our second goal for usability metrics: to determine "how well our web design encourages visitors to come back to our site". This refers to site "loyalty".

For this we turn to another active web researcher, Jeff Sauro, magus of www.measuringusability.com. Sauro (2009) recently published in his newsletter the results of a study somewhat similar to the adjective study above.

Sauro examined SUS data from 146 participants tested in a dozen venues such as rental car companies, financial applications, and websites like Amazon.com.

In addition to the usual SUS queries, test participants got one extra question: "How likely are you to recommend this product to a friend or colleague?"

This extra question, it turns out, has quite a pedigree. It gives a "Net Promoter Score" (NPS). Some authors report that this one question offers a good prediction of long-term growth for a company.
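
The article does not spell out how the score is computed, so here is a sketch based on the commonly published definition: answers are given on a 0 to 10 scale, 9s and 10s count as "promoters", 0 through 6 count as "detractors", and the NPS is the percentage of promoters minus the percentage of detractors. The ratings below are invented for illustration.

```python
def net_promoter_score(ratings):
    """Return the NPS (-100 to 100) from a list of 0-10 likelihood ratings."""
    if not ratings:
        raise ValueError("need at least one rating")
    if any(not 0 <= r <= 10 for r in ratings):
        raise ValueError("each rating must be between 0 and 10")
    promoters = sum(1 for r in ratings if r >= 9)    # rated 9 or 10
    detractors = sum(1 for r in ratings if r <= 6)   # rated 0 through 6
    return 100.0 * (promoters - detractors) / len(ratings)


# Hypothetical answers from ten test participants.
ratings = [10, 9, 9, 8, 7, 7, 6, 5, 10, 3]
print(net_promoter_score(ratings))  # 10.0
```

Here four promoters and three detractors out of ten participants give 40% minus 30%, or an NPS of 10.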

Upon correlating scores from the NPS with overall results of the SUS, Sauro found that the SUS explains about 36% of the variability in the Net Promoter Scores.
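
"Explains about 36% of the variability" is the squared correlation: an r-squared of 0.36 corresponds to a correlation of about 0.6 between the two measures. The sketch below shows how you might compute both figures for your own data. The paired scores are invented for illustration and are not Sauro's data; `pearson_r` is a hypothetical helper name.

```python
import math


def pearson_r(xs, ys):
    """Return the Pearson correlation between two equal-length lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of the same length, at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined when a list has no variation")
    return covariance / math.sqrt(spread_x * spread_y)


# Hypothetical paired data: each participant's SUS score and
# 0-10 "likely to recommend" answer.
sus_scores = [55.0, 62.5, 70.0, 77.5, 85.0, 90.0]
recommend = [4, 6, 7, 6, 9, 10]

r = pearson_r(sus_scores, recommend)
print("r:", round(r, 2))
print("share of variability explained (r squared):", round(r * r, 2))
```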

He points out that people identified as a "Promoter" have an average SUS score of 82 (plus or minus about five points). So, if you want your web site to serve as a beacon for loyal customers ("promoters"), strive for an SUS score above 80.

Recall that earlier in this article, an SUS score of 80 gets a "B" reflecting roughly the midpoint between the adjective phrases "Good" and "Excellent" (see Figure 3 above).

So making the grade of "B" also gets you some customer loyalty. Not bad.

SUS-tainability: the gist of it all

Here are a few points to help you attain SUS at-one-ment.

- The SUS questionnaire offers you a standard measurement tool for assessing your designs.

- You can compare your results against results from other usability tests using adjectives like OK, Good, Excellent, and Best Imaginable.

- You can assign a letter grade to your test results and share that with your colleagues.

- You can even say your web site promotes loyalty (return visits) when participants give it an SUS score above 80. (My November 2009 HFI Newsletter about "cognitive lock-in" gives further evidence on usability as the missing link to customer loyalty.)

- The SUS questionnaire has no fees. You may use it freely.

- It's short, sweet, quick, and (not so) dirty! (See Tullis and Stetson, 2004, if you have absolutely any doubts about this.)

Check out the references below for details. I've used the SUS many times. It helps you communicate to any client the overall subjective response of usability test participants.

If user experience is important to you, then the SUS gives voice to the experience of your users.

References

Bangor, Aaron, Kortum, Philip, and Miller, James, 2009. Determining What Individual SUS Scores Mean: Adding an Adjective Rating Scale. Journal of Usability Studies, 4 (3) May 2009, 114-123.

Brooke, John, 1996. SUS: A "quick and dirty" usability scale. In P. W. Jordan, B. Thomas, B. A. Weerdmeester, & I. L. McClelland (Eds.), Usability evaluation in industry (pp. 189–194). London: Taylor & Francis.

Sauro, Jeff, 2009. Does better usability increase customer loyalty? The Net Promoter Score and the System Usability Scale (SUS). 7 Jan 2010.

Sorflaten, John, 2009. Wherefore Art Thou O Usability? – Cognitive lock-in to the rescue. HFI Newsletter, Nov, 2009.

Tullis, T. S. & Stetson, J. N. (2004, June 7-11). A Comparison of Questionnaires for Assessing Website Usability, Usability Professionals Association (UPA) 2004 Conference, Minneapolis, USA.

Tullis, T. S. & Albert, B. (2008, May 28). Tips and Tricks for Measuring the User Experience. Usability and User Experience 2008 UPA-Boston's Seventh Annual Mini UPA Conference, Boston, USA.

Reader comments

Mary M. Couse

Government of Canada

If you run SUS as an online survey, how do you ensure that you get a representative sampling? How do you know that the results would not be skewed by a preponderance of responses from disgruntled or thrilled users?

Ken Gaylin

AT&T

Thanks very much. That last UI Design Newsletter (January 2010) regarding the SUS was great. I will try to use it as soon as an opportunity arises.

Gabe Biller

I don't know much about metrics around usability or UX, and I'm trying to use the SUS scale for the first time right now. My question: Does using a 7-pt Likert scale instead of a 5-pt one make a big difference in the "results"?

J. Femia

Opto 22

I must be missing something here. Since half the questions are negative and half positive, a score of 80 would indicate the respondent is very ambivalent about the product, not that it's a good product. If I love the product and "strongly agree" with questions 1, 3, 5, 7, and 9 (score 25), then I will "strongly disagree" with questions 2, 4, 6, 8, and 10 (score 5; total SUS score 30). I'd get the same score if I hated the product and reversed my answers. Would you please explain? Thanks.

Joshua Scott

U.S. Army

Thanks again for the valuable info about using that 11th question. Just to let you know, I used the previous test results as a baseline of usability (63%) and ran another survey using only two questions (part of the appeal). After conducting the survey with two questions, one asking participants to rate usability and another optional open-ended question asking how we can make the site better, I was able to conclude that we increased usability by 10% after the redesign.

Sergey Sinyakov, CUA, CXA

SUS definitely did not lose its relevance. It is quite easy to run the test itself but a bit more complicated to analyze the data. Recently we developed an online tool that we called SUS+, because in addition to the traditional SUS questions it includes an option for calculating NPS (Net Promoter Score). Here are a couple of demo links showing how it works. The first one is a participants’ page and the second is a stripped-down version of the analytic tools.

Jeff Zee

Pretty good article, but alas, the author falls into the common trap of interpreting the SUS as an objective measure of usability. He starts off correctly by asking, “Would you like to get a baseline questionnaire measure of subjective “ease of use” on the original design?” but then slips later by asking, “Do you need a score of 50 (out of 100) to say that a product is usable, or do you need a score of 75 or 100?”

The SUS cannot tell you whether a product is usable or not – it can only tell you how users subjectively perceive it. Anyone who has ever run a usability study knows that perception and reality are often not the same (users don't realize they made errors, etc.). Bangor, et al., point this out in their article. This misunderstanding of what the SUS is and does leads individuals to make unfounded conclusions such as the commenter above who claims to have “increased usability by 10%.” Lastly, to address one of the commenter questions above, calculating the SUS is not a straightforward process of simply adding the individual scores together. The negative scales have their scores reversed and all scales are converted to a 0-4 scale. There are calculators out there that allow you to simply plug in your raw scores to calculate the overall score.
